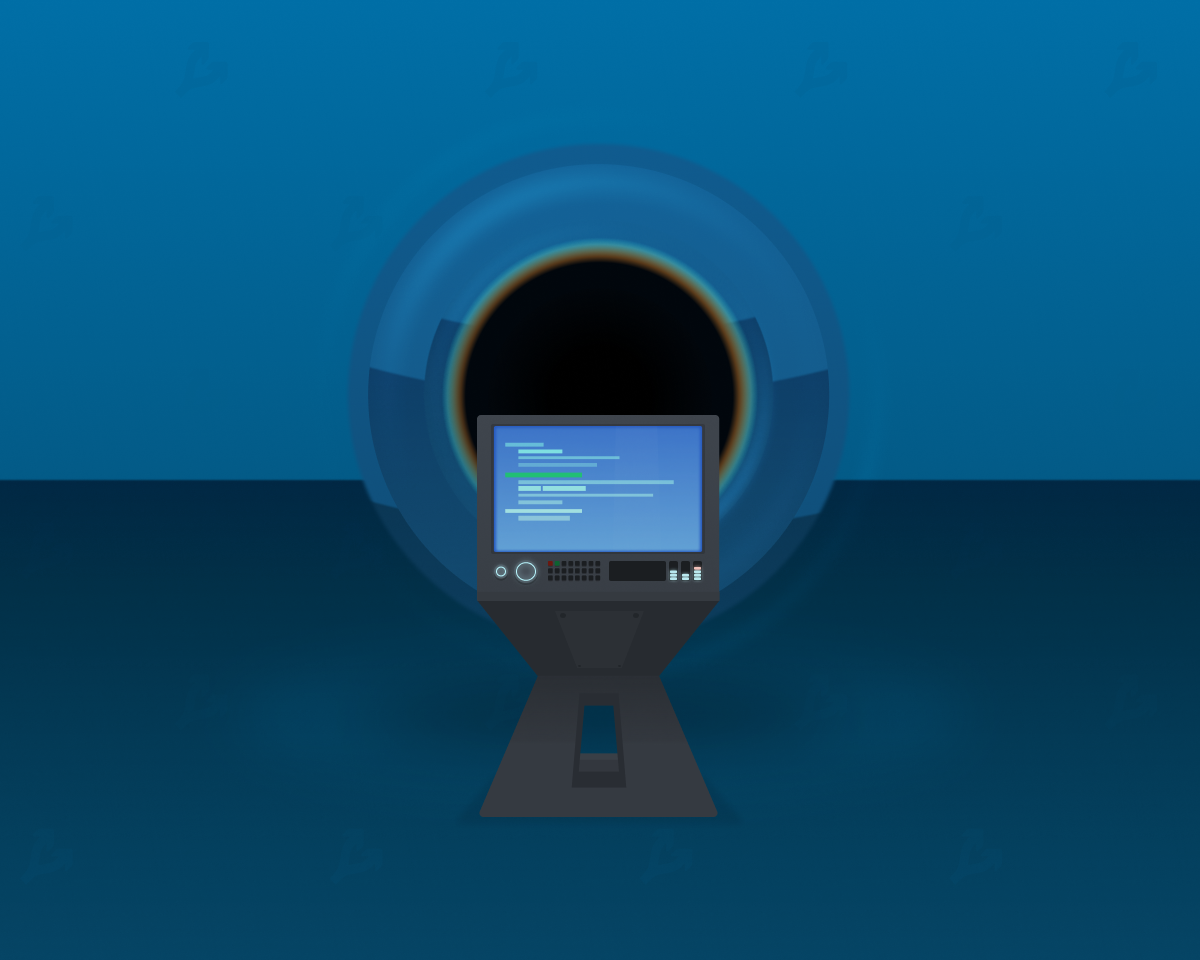

New functionality arriving in iOS 16 will enable apps to trigger real-world actions hands-free. That means users could do things like start playing music just by walking into a room or turn on an e-bike for a workout just by getting on it. Apple told developers today, in a session hosted during the company’s Worldwide Developers Conference (WWDC), that these hands-free actions could be triggered even if the iOS user isn’t actively using the app at the time.

The update, which leverages Apple’s Nearby Interaction framework, could lead to some interesting use cases where the iPhone becomes a way to interact with objects in the real world, if developers and accessory makers choose to adopt the technology.

During the session, Apple explained how apps today can connect to and exchange data with Bluetooth LE accessories even while running in the background. In iOS 16, however, apps will also be able to start a Nearby Interaction session in the background with a Bluetooth LE accessory that supports Ultra Wideband.

Related to this, Apple updated the specification for accessory manufacturers to support these new background sessions.
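For developers, the app side of such a session might look roughly like the sketch below. It assumes the accessory has already been paired and connected over Bluetooth LE and has sent its Ultra Wideband configuration data to the app. The `AccessorySessionController` class and its `start` method are hypothetical names used for illustration, and the Bluetooth transport is left out because it is specific to each app. Background use also depends on the background modes and accessory requirements in Apple's documentation, which are not shown here.

```swift
import Foundation
import NearbyInteraction

/// Hypothetical controller (not from Apple's session) that starts a Nearby
/// Interaction session with a UWB accessory already connected over Bluetooth LE.
@available(iOS 16.0, *)
final class AccessorySessionController: NSObject, NISessionDelegate {
    private let session = NISession()

    override init() {
        super.init()
        session.delegate = self
    }

    /// - Parameters:
    ///   - accessoryData: configuration data received from the accessory over
    ///     the app's own Bluetooth LE channel (assumed to exist already).
    ///   - bluetoothPeerIdentifier: the identifier of the paired peripheral,
    ///     for example `CBPeripheral.identifier`.
    func start(accessoryData: Data, bluetoothPeerIdentifier: UUID) {
        do {
            // Tying the configuration to the Bluetooth peer is what lets the
            // system associate the UWB session with the paired accessory.
            let config = try NINearbyAccessoryConfiguration(
                accessoryData: accessoryData,
                bluetoothPeerIdentifier: bluetoothPeerIdentifier)
            session.run(config)
        } catch {
            print("Could not create accessory configuration: \(error)")
        }
    }

    // The framework hands back data that the accessory needs in order to
    // start ranging. Sending it is app-specific, so it is only noted here.
    func session(_ session: NISession,
                 didGenerateShareableConfigurationData shareableConfigurationData: Data,
                 for object: NINearbyObject) {
        // Send `shareableConfigurationData` to the accessory over Bluetooth LE.
    }
}
```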

This paves the way for a future where the line between apps and the physical world blurs, but it remains to be seen whether third-party app and device makers will choose to put the functionality to use.

The new feature is part of a broader update to Apple’s Nearby Interaction framework, which was the focus of the developer session.

Introduced at WWDC 2020 with iOS 14, this framework allows third-party app developers to tap into the U1, or Ultra Wideband (UWB), chip on iPhone 11 and later devices and Apple Watch, as well as third-party accessories. It’s what today powers the Precision Finding capabilities offered by Apple’s AirTag. Precision Finding lets iPhone users open the “Find My” app and be guided to their AirTag’s precise location using on-screen directional arrows, alongside other guidance that shows how far away the AirTag is or whether it might be located on a different floor.

With iOS 16, third-party developers will be able to build apps that do much of the same thing, thanks to a new capability that will allow them to integrate ARKit — Apple’s augmented reality developer toolkit — with the Nearby Interaction framework.

This will allow developers to tap into the device’s trajectory as computed by ARKit, so their apps can also smartly guide a user to a misplaced item or another object the user may want to interact with, depending on the app’s functionality. By leveraging ARKit, developers will gain more consistent distance and directional information than if they were using Nearby Interaction alone.
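As a rough illustration of the ARKit integration described above, the sketch below opts a session into camera assistance when the device reports support for it, then formats the distance and horizontal angle of a nearby object. The function names `runCameraAssistedSession` and `describe` are hypothetical, and the accessory's configuration data is assumed to have been obtained elsewhere. This is a minimal sketch under those assumptions, not the code Apple showed.

```swift
import Foundation
import NearbyInteraction

/// Hypothetical helper: runs an accessory session with camera assistance
/// turned on when the current device supports it.
@available(iOS 16.0, *)
func runCameraAssistedSession(_ session: NISession, accessoryData: Data) throws {
    let config = try NINearbyAccessoryConfiguration(data: accessoryData)

    // Camera assistance is optional hardware-dependent behavior, so check
    // the device's capabilities before enabling it.
    if NISession.deviceCapabilities.supportsCameraAssistance {
        config.isCameraAssistanceEnabled = true
    }
    session.run(config)
}

/// Hypothetical helper: turns the latest measurement into a short text hint.
/// Both values are optional because the framework may not have an estimate yet.
@available(iOS 16.0, *)
func describe(_ object: NINearbyObject) -> String {
    var parts: [String] = []
    if let distance = object.distance {
        parts.append(String(format: "%.1f m away", distance))
    }
    if let angle = object.horizontalAngle {
        // The angle is reported in radians; convert to degrees for display.
        parts.append(String(format: "%.0f degrees off heading", angle * 180 / .pi))
    }
    return parts.isEmpty ? "No estimate yet" : parts.joined(separator: ", ")
}
```

An app would typically call `describe` from its `NISessionDelegate` update callback and use the result to drive whatever arrow or label it shows the user.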

The functionality isn’t limited to AirTag-like accessories manufactured by third parties, however. Apple demoed another use case in which a museum could use Ultra Wideband accessories to guide visitors through its exhibits.

In addition, this feature can be used to overlay directional arrows or other AR objects on top of the camera’s view of the real world as it helps to guide users to the Ultra Wideband object or accessory. Continuing the demo, Apple briefly showed how red AR bubbles could appear on the app’s screen on top of the camera view to point the way to go.

Longer term, this functionality lays the groundwork for Apple’s rumored mixed reality smart glasses, where presumably, AR-powered apps would be core to the experience.

The updated functionality is rolling out to beta testers of the iOS 16 software update, which will reach the general public later this year.
