Watermarking an image to mark it as one’s own is something that has value across countless domains, but these days it’s more difficult than just adding a logo in the corner. Steg.AI lets creators embed a nearly invisible watermark using deep learning, defying the usual “resize and resave” countermeasures.

Ownership of digital assets has had a complex few years, what with NFTs and AI generation shaking up what was a fairly low-intensity field before. If you really need to prove the provenance of a piece of media, there have been ways of encoding that data into images or audio, but these tend to be easily defeated by trivial changes like saving the PNG as a JPEG. More robust watermarks tend to be visible or audible, like a plainly visible pattern or code on the image.

An invisible watermark that can easily be applied, just as easily detected, and which is robust against transformation and re-encoding is something many a creator would take advantage of. IP theft, whether intentional or accidental, is rife online and the ability to say “look, I can prove I made this” — or that an AI made it — is increasingly vital.

Steg.AI has been working on a deep learning approach to this problem for years, as evidenced by this 2019 CVPR paper and the receipt of both Phase I and II SBIR government grants. Co-founders (and co-authors) Eric Wengrowski and Kristin Dana worked for years before that in academic research; Dana was Wengrowski’s PhD advisor.

Wengrowski noted that although they have made numerous advances since 2019, the paper does show the general shape of their approach.

“Imagine a generative AI company creates an image and Steg watermarks it before delivering it to the end user,” he wrote in an email to TechCrunch. “The end user might post the AI-generated image on social media. Copies of the deployed image will still contain the Steg.AI watermark, even if the image is resized, compressed, screenshotted, or has its traditional metadata deleted. Steg.AI watermarks are so robust that they can be scanned from an electronic display or printout using an iPhone camera.”

Although they understandably did not want to provide the exact details of the process, it works more or less like this: instead of having a static watermark that must be awkwardly layered over a piece of media, the company has a matched pair of machine learning models that customize the watermark to the image. The encoding algorithm identifies the best places to modify the image in such a way that people won’t perceive it, but that the decoding algorithm can pick out easily — since it uses the same process, it knows where to look.
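To make the "matched pair" idea concrete, here is a minimal sketch in Python. It is not Steg.AI's method, which is undisclosed and based on deep learning. It swaps in a classical spread-spectrum watermark, where a shared key stands in for the shared process: both sides derive the same faint pseudo-random pattern, so the decoder knows where to look. The function names, the `key` and `strength` parameters, and the flat grayscale pixel list are all assumptions made for illustration.

```python
import random


def _pattern(key, bit_index, n_pixels):
    """Pseudo-random +1/-1 pattern that encoder and decoder both derive from the key."""
    rng = random.Random(f"{key}:{bit_index}")
    return [rng.choice((-1, 1)) for _ in range(n_pixels)]


def embed(pixels, bits, key, strength=3):
    """Add one faint keyed pattern per payload bit to a flat list of 0-255 pixels."""
    out = [float(p) for p in pixels]
    for i, bit in enumerate(bits):
        sign = 1 if bit else -1
        for j, s in enumerate(_pattern(key, i, len(pixels))):
            out[j] += sign * strength * s
    # Clamp back to the valid 8-bit range.
    return [min(255, max(0, round(v))) for v in out]


def extract(pixels, n_bits, key):
    """Recover each bit from the sign of the correlation with its keyed pattern."""
    mean = sum(pixels) / len(pixels)
    bits = []
    for i in range(n_bits):
        pattern = _pattern(key, i, len(pixels))
        corr = sum((p - mean) * s for p, s in zip(pixels, pattern))
        bits.append(1 if corr > 0 else 0)
    return bits


# Hypothetical usage: a synthetic 128x128 grayscale "image" as a flat list.
rng = random.Random(0)
image = [rng.randint(40, 215) for _ in range(128 * 128)]
payload = [1, 0, 1, 1, 0, 0, 1, 0]

marked = embed(image, payload, key="demo-key")
# Crude stand-in for a lossy re-save: throw away the two lowest bits per pixel.
degraded = [(p // 4) * 4 for p in marked]
print(extract(degraded, len(payload), key="demo-key"))
```

Because each bit is spread across every pixel, coarse quantization does not erase it the way it would a mark stored only in the lowest bits. A fixed pattern like this one is not adapted to the image, though, which is the part the company's learned encoder is described as handling.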

The company described it as a bit like an invisible and largely immutable QR code, but would not say how much data can actually be embedded in a piece of media. If it really is anything like a QR code, it can have a kilobyte or three, which doesn’t sound like a lot, but is enough for a URL, hash, and other plaintext data. Multiple-page documents or frames in a video could have unique codes, multiplying this amount. But this is just my speculation.
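For a rough sense of scale, the sketch below adds up a plausible payload and compares it with the largest standard QR code, which holds 2,953 bytes in byte mode (version 40, lowest error correction level). The URL, the hashed bytes, and the note field are made-up placeholders, and nothing here reflects Steg.AI's actual capacity, which the company did not disclose.

```python
import hashlib

# Largest standard QR code: version 40, error correction level L, byte mode.
QR_MAX_BYTES = 2953

# Hypothetical payload fields, for illustration only.
url = b"https://example.com/assets/12345"
digest = hashlib.sha256(b"placeholder for the original image bytes").digest()
note = b"owner=Example Studio;issued=2023-08-01"

payload = url + digest + note
print(f"payload: {len(payload)} bytes of {QR_MAX_BYTES} available")

# If every frame of a video carried its own code, capacity would scale with
# the frame count (here: ten seconds at an assumed 24 frames per second).
frames = 24 * 10
print(f"{frames} frames: up to {frames * QR_MAX_BYTES} bytes")
```

A SHA-256 digest is only 32 bytes, so a link plus a hash plus a short plaintext note uses a small fraction of that budget.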

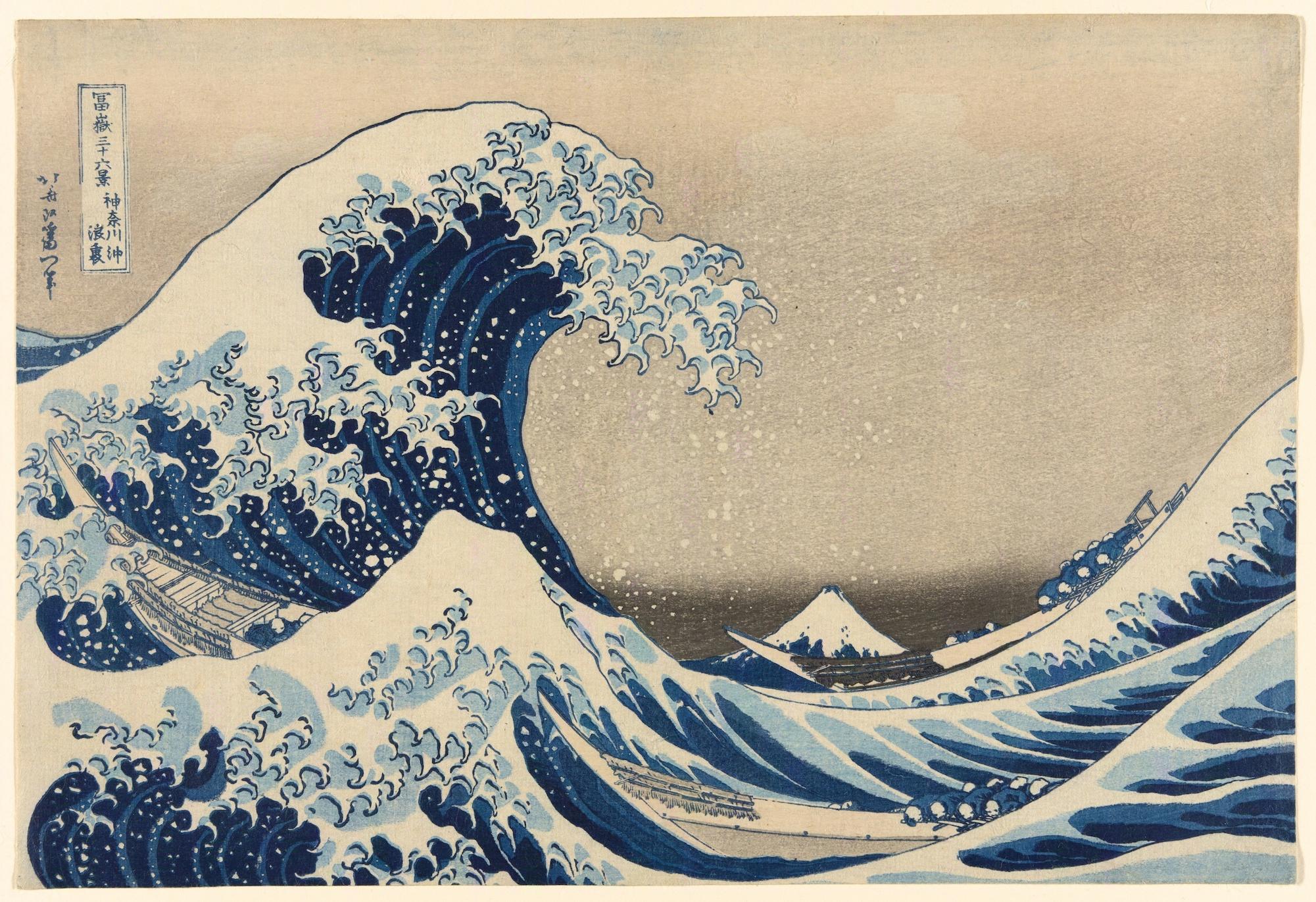

Steg.AI provided multiple images with watermarks for me to inspect, some of which you can see embedded here. I was also provided (and asked not to share) the matching pre-watermark images; while on close inspection some perturbations were visible, if I didn’t know to look for them I likely would have missed them, or written them off as ordinary JPEG artifacts.

Yes, this one is watermarked.

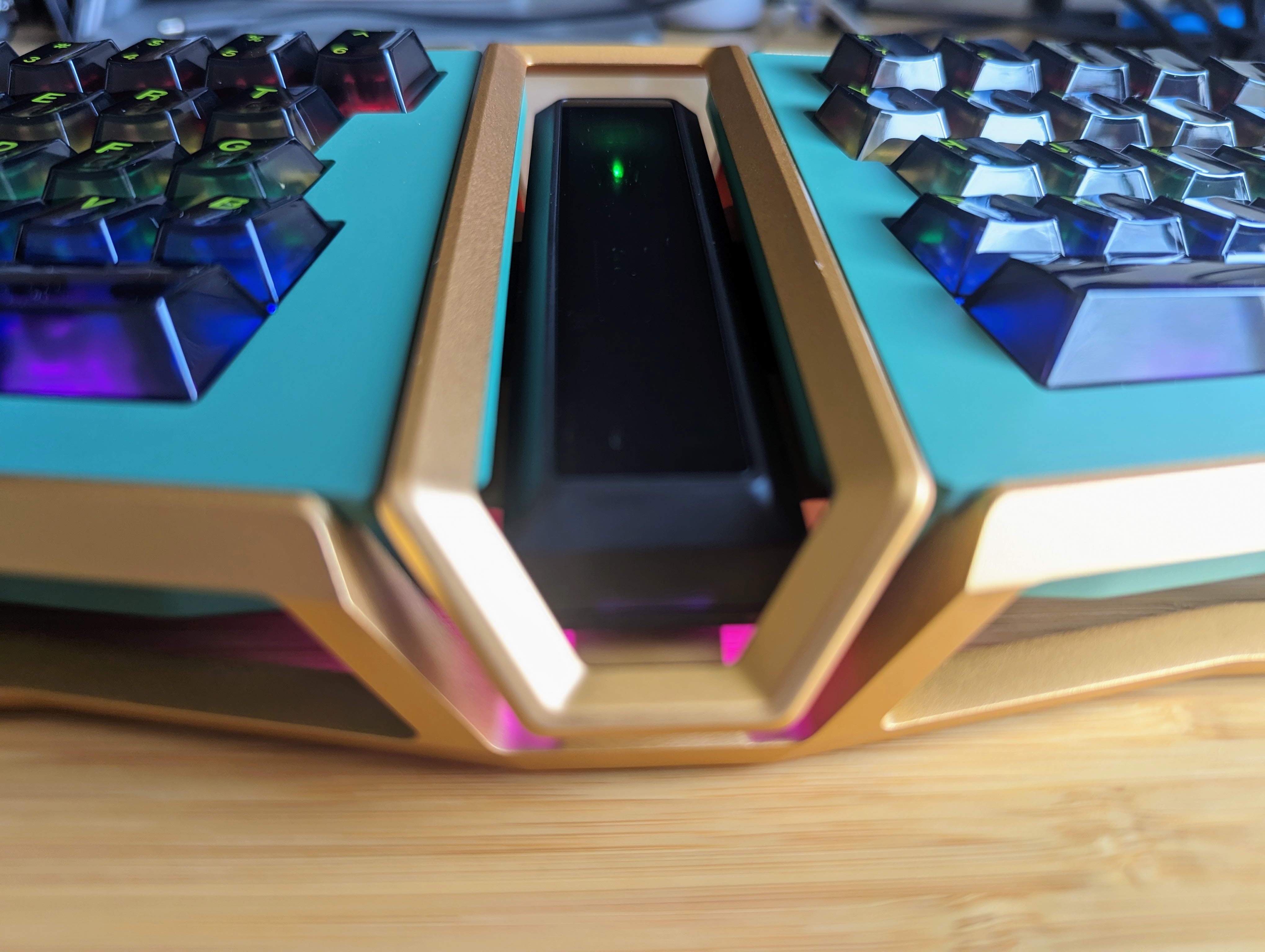

Here’s another, of Hokusai’s most famous work:

Image Credits: Hokusai / The Art Institute of Chicago

You can imagine how such a subtle mark might be useful for a stock photography provider, a creator posting their images on Instagram, a movie studio distributing pre-release copies of a feature, or a company looking to mark its confidential documents. And these are all use cases Steg.AI is looking at.

It wasn’t a home run from the start. Early on, after talking with potential customers, “we realized that a lot of our initial product ideas were bad,” recalled Wengrowski. But they found that robustness, a key differentiator of their approach, was definitely valuable, and since then have found traction among “companies where there is strong consumer appetite for leaked information,” such as consumer electronics brands.

“We’ve really been surprised by the breadth of customers who see deep value in our products,” he wrote. Their approach is to provide enterprise-level SaaS integrations, for instance with a digital asset management platform. That way no one has to say “watermark that” before sending it out; all media is marked and tracked as part of the normal handling process.

Concept illustration of a Steg.AI app verifying an image.

An image could be traced back to its source, and changes made along the way could conceivably be detected as well. Or alternatively, the app or API could provide a confidence level that the image has not been manipulated — something many an editorial photography manager would appreciate.

This type of thing has the potential to become an industry standard, both because companies want it and because it may in the future be required. AI companies just recently agreed to pursue research around watermarking AI content, and something like this would be a useful stopgap while a deeper method of detecting generated media is considered.

Steg.AI has gotten this far with NSF grants and angel investment totaling $1.2 million, but just announced a $5 million A round led by Paladin Capital Group, with participation from Washington Square Angels, the NYU Innovation Venture Fund, and angel investors Alexander Lavin, Eli Adler, Brian Early and Chen-Ping Yu.
